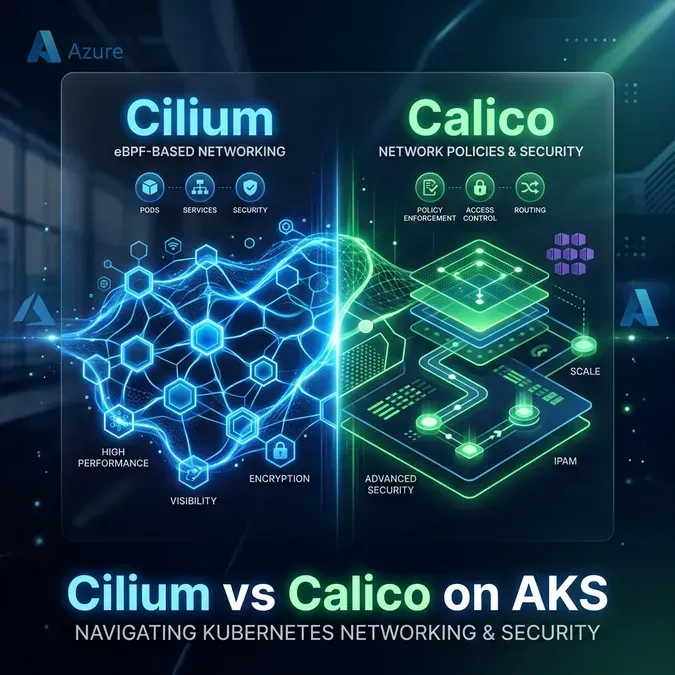

Cilium vs Calico on AKS: Which CNI Should You Actually Use?

AKS gives you two serious CNI options: Calico for network policy and Cilium for eBPF-native networking. Here's how they actually differ in production and which one fits your threat model.

AKS shipped Cilium support in 2023 and it's been GA ever since. Most teams still default to Calico because that's what the docs pointed to for years. I keep seeing production clusters on Calico that were set up in 2021, never revisited, and nobody's sure why they chose it.

If you're running advanced workloads, such as deploying LLMs on Kubernetes, your choice of CNI significantly affects node-level observability and security. The question isn't which CNI is objectively better. The question is whether Calico is still the right default for a new AKS cluster in 2026, and in most cases I'd argue it isn't.

This post is for platform engineers who are either standing up a new AKS cluster or evaluating whether to migrate. I'll cover the architecture of both, where they actually differ, and give you a decision matrix that doesn't pretend the answer is always Cilium.

Why CNI Choice on AKS Is Different From Other K8s Distros

On a self-managed cluster or EKS, you pick your CNI and install it yourself. On AKS, Microsoft wraps CNI selection into the cluster creation API. That changes the tradeoffs significantly.

The default AKS networking mode is Azure CNI, which assigns pod IPs from your VNet subnet itself. Calico and Cilium on AKS sit on top of Azure CNI and handle network policy enforcement, not IP address management. This is an important distinction: neither Calico nor Cilium owns the pod network on AKS the way it would on a self-managed kubeadm cluster.

There's one exception: Azure CNI Powered by Cilium (what Microsoft markets as the Cilium integration). This gives Cilium control over both the dataplane and network policy, replacing the Azure NPM (Network Policy Manager) component entirely.

Why switching later is painful: CNI changes on AKS require node pool recreation. You can't drain and migrate pods in place; you're standing up new nodes, migrating workloads, and decommissioning the old pool. In a large cluster with stateful workloads, this is a multi-day project. Choose your CNI before your first production workload, not after.

Calico on AKS: What You're Actually Getting

When you create an AKS cluster with --network-policy calico, Microsoft installs:

- Calico's Felix agent on each node (enforces network policy via iptables/ipvs)

- Typha (for clusters > ~50 nodes — reduces load on the Kubernetes API by aggregating Felix updates)

- Support for the standard `NetworkPolicy` resource, plus Calico's own CRDs

What you're not getting is Calico's IPAM or BGP routing. Azure CNI still handles pod IPs. Calico is purely the network policy enforcement layer here.

Felix works by translating NetworkPolicy resources into iptables chains. Every policy rule becomes a set of iptables rules on every node in the cluster. This has a well-known scaling problem: iptables rule evaluation is O(n) — linear with the number of rules. At 100 services it's fine. At 1000 services, iptables chains can contain tens of thousands of rules, and every connection lookup traverses them sequentially.

What Calico Does Well on AKS

GlobalNetworkPolicy is genuinely useful. Kubernetes' built-in NetworkPolicy is namespace-scoped — you can't write a single policy that applies to all namespaces without duplicating it. Calico's GlobalNetworkPolicy CRD gives you cluster-wide rules. The canonical use case is a default-deny all egress policy applied to every workload namespace, with explicit allow rules per service.

```yaml
apiVersion: projectcalico.org/v3
kind: GlobalNetworkPolicy
metadata:
  name: default-deny-egress
spec:
  # Apply to every pod outside the system namespaces
  selector: "projectcalico.org/namespace not in {'kube-system', 'monitoring'}"
  types:
    - Egress
  egress:
    # Allow DNS
    - action: Allow
      protocol: UDP
      destination:
        ports: [53]
    # Same-namespace traffic can't be allowed here: Calico selectors compare
    # a label to a literal string, not to another label. Add that allow rule
    # per namespace with a namespaced policy instead.
    # Deny everything else
    - action: Deny
```

Calico also supports WireGuard encryption for pod-to-pod traffic (encrypted in the kernel, minimal overhead), and IPIP/VXLAN overlays if you're not using Azure CNI's flat network.

Cilium on AKS: What You're Actually Getting

Cilium on AKS comes in two configurations:

- BYO CNI mode: Azure CNI handles IPs, Cilium handles policy. Similar to the Calico integration, but with Cilium's eBPF engine instead of iptables.

- Azure CNI Powered by Cilium: Microsoft-managed integration where Cilium replaces Azure NPM entirely and handles both dataplane and policy. This is the option you want if you're starting fresh.

The critical difference from Calico is the dataplane: Cilium uses eBPF programs attached to the Linux kernel's networking hooks, bypassing iptables entirely.

eBPF programs evaluate policy decisions using hash table lookups — O(1) regardless of how many policies exist. Adding your 1000th service doesn't degrade policy evaluation time. This isn't theoretical: production clusters with 10,000+ services and Cilium see consistent policy latency; the same cluster on iptables-based CNIs starts showing measurable latency spikes in the policy evaluation path.

Head-to-Head Comparison

| Feature | Calico (AKS) | Cilium (AKS) |
|---|---|---|
| Dataplane | iptables / ipvs | eBPF |
| Policy evaluation complexity | O(n) rules | O(1) hash lookup |
| Standard NetworkPolicy | ✅ | ✅ |
| Cluster-wide policies | ✅ GlobalNetworkPolicy | ✅ CiliumClusterwideNetworkPolicy |
| L7 (HTTP/gRPC) policy | ❌ | ✅ (via Envoy) |
| DNS-aware policy | ❌ | ✅ |
| Built-in observability | ❌ | ✅ Hubble |
| Runtime security | ❌ | ✅ Tetragon |
| WireGuard encryption | ✅ | ✅ |
| Windows node support | ✅ | ❌ (Linux only) |
| AKS managed option | ✅ | ✅ (Azure CNI Powered by Cilium) |
| Azure support tier | Microsoft supported | Microsoft supported (managed mode) |
| Resource overhead (DaemonSet) | ~100MB RAM | ~200MB RAM |
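To make the "Cluster-wide policies" row concrete, here is a rough Cilium counterpart to the Calico default-deny example. Treat it as a sketch rather than a drop-in manifest: the policy name and the excluded namespaces are assumptions, and the namespace label key simply reuses the `k8s:`-prefixed form that Cilium attaches to pods.

```yaml
apiVersion: cilium.io/v2
kind: CiliumClusterwideNetworkPolicy
metadata:
  name: default-deny-egress-except-dns   # hypothetical name
spec:
  # Select every pod outside the system namespaces (namespace list is an assumption)
  endpointSelector:
    matchExpressions:
      - key: "k8s:io.kubernetes.pod.namespace"
        operator: NotIn
        values:
          - kube-system
          - monitoring
  egress:
    # Once a pod is selected by any egress rule, egress that no rule allows is dropped.
    # The only traffic allowed here is DNS to kube-dns.
    - toEndpoints:
        - matchLabels:
            "k8s:io.kubernetes.pod.namespace": kube-system
            "k8s:k8s-app": kube-dns
      toPorts:
        - ports:
            - port: "53"
              protocol: ANY
```

Unlike the Calico version there is no explicit deny rule. Selecting a pod with an egress rule is what switches it into default-deny for egress, and per-service allow rules are then layered on top.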

Performance: eBPF vs iptables at Scale

The performance argument for Cilium gets more compelling as your cluster grows. Here's the concrete mechanism.

When a pod sends a packet, the kernel checks iptables chains to decide if it's allowed. Each chain is a linked list of rules. For a cluster with 500 services and moderate network policy coverage, you might have 50,000+ iptables rules per node. Every new connection traverses this list.

Cilium replaces this with eBPF maps (essentially kernel hash tables). Policy lookups are a single hash operation. Kernel conntrack integration is also bypassed for pod-to-pod traffic in Cilium's eBPF mode, removing another bottleneck.

The practical effect: In benchmarks published by the Cilium team and independently reproduced, throughput stays flat as service count grows with Cilium, while iptables-based CNIs show roughly linear degradation past ~1000 services. For most AKS clusters under ~200 services, you won't feel this in latency numbers. But you'll feel it during kube-proxy churn (every Service change triggers iptables sync across every node), and in node boot times when the full iptables ruleset has to be re-installed.

Network Policy Depth: Where They Actually Differ

Standard Kubernetes NetworkPolicy is relatively primitive: allow/deny based on pod selector, namespace selector, and port. Both Calico and Cilium extend this significantly, but in different directions.

Calico's Strengths: GlobalNetworkPolicy and BGP

Calico's GlobalNetworkPolicy is the most useful extension for multi-tenant clusters. The example above (default-deny egress globally) is something you simply can't do with standard NetworkPolicy without installing policies in every namespace individually.
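For comparison, this is roughly what the same baseline looks like with only the standard API. It's a minimal sketch: the namespace `payments` is a placeholder, and the manifest has to be repeated (or templated) for every namespace you want covered.

```yaml
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: default-deny-egress
  namespace: payments        # placeholder; one copy per namespace
spec:
  podSelector: {}            # every pod in this namespace
  policyTypes:
    - Egress
  egress:
    # Allow DNS lookups; all other egress is denied because nothing else allows it
    - ports:
        - protocol: UDP
          port: 53
        - protocol: TCP
          port: 53
```

The duplication is the point: with fifty namespaces you maintain fifty copies, and a new namespace is unprotected until someone remembers to add its copy.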

Calico also supports NetworkSets and GlobalNetworkSets: you give a group of external IP ranges a name and labels once, then select it by label in policy rules rather than repeating CIDR ranges in every policy.
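One way Calico lets you avoid hand-maintained CIDR lists inside each policy is a GlobalNetworkSet selected by label. The sketch below assumes a hypothetical partner API and a workload labelled `app: payments`; the IP range comes from the reserved documentation space and is a placeholder.

```yaml
apiVersion: projectcalico.org/v3
kind: GlobalNetworkSet
metadata:
  name: partner-api-ranges          # hypothetical name
  labels:
    role: partner-api               # label that policies select on
spec:
  nets:
    - 203.0.113.0/24                # placeholder range
---
apiVersion: projectcalico.org/v3
kind: GlobalNetworkPolicy
metadata:
  name: allow-partner-api-egress    # hypothetical name
spec:
  order: 100                        # lower order is evaluated earlier
  selector: "app == 'payments'"
  types:
    - Egress
  egress:
    # Allow HTTPS to whatever ranges carry the partner-api label
    - action: Allow
      protocol: TCP
      destination:
        selector: "role == 'partner-api'"
        ports: [443]
```

When the partner's ranges change, you edit the network set once and every policy that selects `role == 'partner-api'` picks up the change.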

Cilium's Strengths: L7 Policy and DNS-Awareness

This is where Cilium genuinely leaps ahead. DNS-aware egress policy lets you write rules like "this pod can only reach api.stripe.com" — and Cilium enforces it at the DNS resolution level, not just by CIDR.

```yaml
apiVersion: "cilium.io/v2"
kind: CiliumNetworkPolicy
metadata:
  name: payments-service-egress
spec:
  endpointSelector:
    matchLabels:
      app: payments
  egress:
    # Allow DNS, and route lookups through Cilium's DNS proxy so that
    # toFQDNs rules can learn the IPs behind each name
    - toEndpoints:
        - matchLabels:
            "k8s:io.kubernetes.pod.namespace": kube-system
            "k8s:k8s-app": kube-dns
      toPorts:
        - ports:
            - port: "53"
              protocol: ANY
          rules:
            dns:
              - matchPattern: "*"
    # Allow Stripe API specifically
    - toFQDNs:
        - matchName: "api.stripe.com"
      toPorts:
        - ports:
            - port: "443"
              protocol: TCP
```

You cannot write this policy with Calico. You'd have to find Stripe's IP ranges, hardcode CIDRs, and update them when Stripe adds an IP. With Cilium, you write the FQDN and it resolves dynamically.
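If the dependency spans several hostnames, the same rule can take a pattern instead of an exact name. This fragment is a sketch that would sit alongside a DNS rule like the one in the policy above. The wildcard domain is only an illustration, not a statement about which hostnames Stripe actually uses.

```yaml
egress:
  - toFQDNs:
      # Matches subdomains such as api.stripe.com, but not the bare stripe.com
      - matchPattern: "*.stripe.com"
    toPorts:
      - ports:
          - port: "443"
            protocol: TCP
```

Patterns trade precision for convenience, so prefer `matchName` wherever the list of hostnames is short and known.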

L7 HTTP policy goes further:

```yaml
egress:
  - toEndpoints:
      - matchLabels:
          app: internal-api
    toPorts:
      - ports:
          - port: "8080"
            protocol: TCP
        rules:
          http:
            - method: GET
              path: "/api/v1/read.*"
```

This allows GET requests to specific paths only. POST/DELETE to the same endpoint is denied. This is a fundamentally different threat model, one that iptables-based CNIs can't achieve because they operate at L4.

Observability: Hubble vs Nothing

If you're running Calico on AKS, your network visibility story is: Azure Monitor (node-level metrics), NSG flow logs (VNet-level), and kubectl exec into pods to run tcpdump. That's it. Calico itself has no built-in flow visibility tooling.

Cilium ships Hubble — a distributed network observability platform built on eBPF. Hubble gives you:

- Per-flow visibility: every connection between pods, with policy verdict (allowed/denied), latency, and L7 details (HTTP method, status code, gRPC service/method)

- Hubble UI: real-time network map of your cluster's traffic patterns

- Hubble CLI: `hubble observe --namespace payments --type drop` shows every dropped packet in the payments namespace with the policy that dropped it

- Prometheus metrics: `hubble_flows_processed_total` and `hubble_drop_total`, ready to alert on

```bash
# Show all dropped connections in the last 5 minutes
hubble observe --since 5m --verdict DROPPED

# Show HTTP traffic to a specific service
hubble observe --namespace payments --to-service checkout --protocol http
```

The debugging experience with Hubble is genuinely transformative compared to iptables. When a developer says "my service can't reach the database," you can look at Hubble, see the exact policy drop with the policy name, and point them to the fix in under 30 seconds. With Calico and iptables, you're doing `iptables -L -v -n | grep DROP` on the node and trying to correlate packet counts to pod IPs.
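The Prometheus metrics mentioned earlier can feed an alert so that drops surface before a developer reports them. The rule below is a sketch under several assumptions: it uses the Prometheus Operator's PrometheusRule resource, Hubble's drop metric is enabled and scraped, and the names, namespace, and threshold are placeholders to tune for your cluster.

```yaml
apiVersion: monitoring.coreos.com/v1
kind: PrometheusRule
metadata:
  name: hubble-drop-alerts            # hypothetical name
  namespace: monitoring               # assumes the operator watches this namespace
spec:
  groups:
    - name: hubble.drops
      rules:
        - alert: HubbleSustainedDrops # hypothetical alert name
          # Per-second rate of dropped flows over 5 minutes, grouped by drop reason
          expr: sum by (reason) (rate(hubble_drop_total[5m])) > 1
          for: 10m
          labels:
            severity: warning
          annotations:
            summary: "Sustained packet drops reported by Hubble ({{ $labels.reason }})"
```

Grouping by `reason` keeps policy denials separate from other drop causes, which makes the alert easier to route to the right team.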

Tetragon: Runtime Security That Calico Can't Match

Tetragon is the part of the Cilium ecosystem that goes beyond networking. It's an eBPF-based runtime security tool that can observe and enforce at the syscall level — detecting things like unexpected file writes, process executions, and privilege escalations.

```yaml
apiVersion: cilium.io/v1alpha1
kind: TracingPolicy
metadata:
  name: detect-shell-execution
spec:
  kprobes:
    - call: "sys_execve"
      syscall: true
      args:
        - index: 0
          type: "string"
      selectors:
        - matchArgs:
            - index: 0
              operator: "Postfix"
              values:
                - "/bin/sh"
                - "/bin/bash"
          matchActions:
            - action: Sigkill
```

This policy kills any process that tries to exec a shell. As written it is not limited to pods: it matches every execve on the node, host processes included, so scope it to the workloads you care about before enabling enforcement. No Calico equivalent exists. You'd need a separate runtime security tool (Falco, for example) to get this, and it would be a separate DaemonSet with its own overhead.

Tetragon is also deployed as its own DaemonSet rather than inside the Cilium agent, but it uses the same eBPF approach and filters events in the kernel, which keeps the overhead of the observation layer low.

AKS-Specific Gotchas

Windows node pools: This is the clearest Calico advantage. Cilium is Linux-only. If you have Windows node pools (common in .NET shops running AKS), you need Calico or a Windows-compatible CNI. There's no workaround here — Cilium simply won't schedule on Windows nodes.

Azure CNI IP exhaustion: Azure CNI pre-allocates IPs from your subnet for each node. This is a problem independent of whether you use Calico or Cilium, but it's worth noting because both CNIs sit on top of it. If you're hitting subnet exhaustion, look at Azure CNI Overlay mode rather than changing CNI plugins.

Node pool recreation on migration: As mentioned earlier, migrating from Calico to Cilium on an existing AKS cluster requires creating new node pools. Microsoft doesn't support in-place CNI changes. Plan for this upfront.

AKS managed Cilium: "Azure CNI Powered by Cilium" is managed by Microsoft — they handle upgrades, bug fixes, and support tickets. If you self-install Cilium via Helm on top of Azure CNI, you own the upgrades. The managed option is strongly preferred unless you need features that are ahead of what Microsoft ships.

Hubble in managed mode: When using Azure CNI Powered by Cilium, Hubble is included but you need to enable the Hubble Relay explicitly:

```bash
az aks update \
  --resource-group myResourceGroup \
  --name myAKSCluster \
  --enable-hubble
```

Decision Matrix

Choose Calico if:

- You have Windows node pools (hard requirement)

- Your team has deep Calico expertise and existing policy libraries

- You need GlobalNetworkPolicy for cluster-wide baseline policies without moving to Cilium

- You're on an existing cluster with Calico and migration pain exceeds the observability benefit

- You're constrained to only Microsoft-supported components and haven't evaluated Azure CNI Powered by Cilium

Choose Cilium if:

- You're creating a new cluster with no migration cost

- You want DNS-aware or L7-aware network policies (payments, external API calls, internal service authorization)

- Hubble observability matters to your team — network debugging is a recurring pain point

- You want Tetragon for runtime security without adding another tool

- Your cluster has or will exceed ~500 services (eBPF scale advantage becomes meaningful)

- You're adopting a service mesh and want Cilium's transparent L7 integration

For most greenfield AKS clusters in 2026, Azure CNI Powered by Cilium is the right default. The observability improvement alone is worth it: Hubble has saved my team hours on networking investigations that would each have been a 30-minute iptables archaeology session.

The caveat is Windows nodes. If you have them now or plan to add them, you're on Calico until Cilium adds Windows support, which isn't on the near-term roadmap.

Further Reading

If you want to go deeper on eBPF and what makes Cilium's dataplane work, see my post on eBPF for Platform Engineers: Cilium, Hubble, and Tetragon Without the Hype.

For network policy patterns specifically around RBAC and least privilege for workload identities, Kubernetes RBAC in Practice covers the access control model that your network policy layer should mirror.

If you're building out an AKS platform and want a second opinion on CNI choice, networking architecture, or policy strategy, reach out via the contact page — happy to talk through your specific constraints.

Frequently Asked Questions

Can I migrate from Calico to Cilium on an existing AKS cluster?

Yes, but not in place. Microsoft requires you to create new node pools with the Cilium configuration enabled and then migrate your workloads. There is no automated conversion tool; you will need to re-verify your network policies as you transition.

Does Cilium replace Azure CNI?

No. In most AKS configurations, Cilium works with Azure CNI. Azure CNI handles pod IP assignment from your VNet, while Cilium (in eBPF mode) handles the dataplane and network policy enforcement.

Is Hubble's performance impact significant?

Hubble is extremely efficient because it runs as a lightweight eBPF program directly in the kernel. In our experience, its CPU overhead is negligible compared to the visibility it provides. For most AKS clusters, there is no noticeable latency penalty for having Hubble enabled.

Does Calico support eBPF on AKS?

Calico does have an eBPF dataplane, but on AKS, the "managed" integration (using the --network-policy calico flag) primarily uses the standard iptables-based dataplane. If you want the full eBPF experience on AKS, the built-in Cilium integration is currently the most mature and easiest to maintain.