Docker Swarm vs Kubernetes vs Nomad: Choosing Your Container Orchestrator in 2026

Kubernetes wins by default in most conversations. But Docker Swarm and Nomad are still running real workloads in production. Here's an honest look at all three — including when Kubernetes is the wrong answer.

The honest answer to "should I use Kubernetes?" is sometimes no.

Nomad runs workloads at scale for HashiCorp, Cloudflare, and dozens of enterprises. Docker Swarm is still the right call for a small team that doesn't want to learn a system with 50+ resource types just to deploy three services. The pressure to default to Kubernetes (from conference talks, job postings, and CNCF marketing) is real, but giving in to it is not always correct. If you are currently evaluating your stack, our guide on Choosing the Right DevOps Tools provides a complementary framework for making these high-stakes infrastructure decisions.

I've run all three in production at different points. Kubernetes for a platform team managing 200+ microservices across multiple environments. Swarm for a startup that needed something working in an afternoon. Nomad for a client running mixed container and non-container workloads on bare metal. Each was the right tool for that context.

This post compares all three honestly — architecture, operational complexity, scaling limits, stateful workload support, and the decision framework I'd use if I were choosing today.

The Problem All Three Solve

Before the comparison, it's worth being precise about what orchestration actually does, because the marketing fluff obscures it.

All three orchestrators handle the same core problems:

- Scheduling: which container runs on which machine?

- Health management: restart failed containers, replace unhealthy instances

- Rolling deployments: update running services without downtime

- Service discovery: how does service A find service B's current IP/port?

- Secret injection: how do secrets reach containers without being in environment variables or images?

- Resource allocation: bin-pack workloads onto nodes without overcommitting
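To make the scheduling and resource-allocation items above concrete, here is a minimal sketch of bin-packing using a first-fit-decreasing heuristic. It is not how any of the three orchestrators actually scores nodes; the `Node` class, the `schedule` function, and the units (millicores, MiB) are assumptions chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    cpu_free: int   # millicores still unallocated (illustrative unit)
    mem_free: int   # MiB still unallocated (illustrative unit)
    tasks: list = field(default_factory=list)


def schedule(tasks, nodes):
    """Place each (name, cpu, mem) task on the first node with room.

    Returns the names of tasks that could not be placed. Those stay
    pending instead of overcommitting a node.
    """
    pending = []
    # Largest tasks first, so big tasks are not squeezed out by small ones.
    for name, cpu, mem in sorted(tasks, key=lambda t: (t[2], t[1]), reverse=True):
        for node in nodes:
            if node.cpu_free >= cpu and node.mem_free >= mem:
                node.cpu_free -= cpu
                node.mem_free -= mem
                node.tasks.append(name)
                break
        else:
            # No node had enough free CPU and memory for this task.
            pending.append(name)
    return pending
```

A task that fits nowhere ends up in the returned list, which is the same idea as a Kubernetes pod sitting in Pending or a Nomad allocation being blocked.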

Everything beyond this core is ecosystem and polish. Kubernetes wins on ecosystem breadth. Swarm wins on simplicity. Nomad wins on flexibility.

Docker Swarm

Architecture

Swarm is built directly into Docker Engine — no separate installation, no control plane components to manage. You run docker swarm init on one node and docker swarm join on the rest.

Managers maintain cluster state via Raft consensus. You need an odd number of managers (3 or 5) for HA — the same quorum rules as etcd. Workers run tasks (containers). The distinction between manager and worker is soft; managers can run containers too.
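The odd-number rule falls out of simple majority arithmetic. The sketch below shows it; the function names are mine, not part of any Docker or etcd API.

```python
def raft_quorum(managers: int) -> int:
    """Smallest number of managers that forms a majority."""
    if managers < 1:
        raise ValueError("a cluster needs at least one manager")
    return managers // 2 + 1


def tolerated_failures(managers: int) -> int:
    """How many managers can be lost while a majority still remains."""
    return managers - raft_quorum(managers)


if __name__ == "__main__":
    for n in range(1, 8):
        print(f"{n} managers: quorum={raft_quorum(n)}, "
              f"tolerates {tolerated_failures(n)} failure(s)")
```

Going from 3 to 4 managers raises the quorum from 2 to 3 but still tolerates only one failure, so the fourth manager adds coordination cost without adding resilience.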

Networking is built-in via VXLAN overlay — containers across nodes can communicate using service names as DNS. No CNI plugin required, no network add-on to install or maintain.

The deployment model maps almost directly from Docker Compose:

```yaml
# docker-compose.yml → docker stack deploy
version: "3.9"
services:
  api:
    image: myapp:1.2.3
    deploy:
      replicas: 3
      update_config:
        parallelism: 1
        delay: 10s
        failure_action: rollback
      restart_policy:
        condition: on-failure
    ports:
      - "8080:8080"
    secrets:
      - db_password

secrets:
  db_password:
    external: true
```

Run docker stack deploy -c docker-compose.yml myapp and you're running. The entire mental model is Docker Compose with HA.
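The update_config settings in that file (parallelism and failure_action: rollback) describe a batch-by-batch update. Here is a rough simulation of that behavior, not Swarm's actual implementation. The `rolling_update` function and the `is_healthy` callback are hypothetical stand-ins, and the delay between batches is left out.

```python
def rolling_update(replicas, new_image, is_healthy, parallelism=1):
    """Update replicas in batches and restore every image if a batch fails.

    replicas   -- list of image tags currently running, one per replica
    is_healthy -- callable(image) -> bool, a stand-in for a health check
    Returns (final_replicas, succeeded).
    """
    if parallelism < 1:
        raise ValueError("parallelism must be at least 1")
    previous = list(replicas)
    current = list(replicas)
    for start in range(0, len(current), parallelism):
        batch = range(start, min(start + parallelism, len(current)))
        for i in batch:
            current[i] = new_image
        if not all(is_healthy(current[i]) for i in batch):
            # Mirrors failure_action: rollback by returning the old state.
            return previous, False
    return current, True
```

With parallelism 1, a bad image takes out at most one replica before the update stops, which is why small batch sizes are the usual default for production services.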

Strengths

- Operational simplicity: no YAML resource types to learn beyond what you already know from Compose. A developer who knows Docker can understand a Swarm deployment in an hour.

- Built-in load balancing: Swarm includes a built-in routing mesh — a published port on any node routes to any healthy replica. No ingress controller required.

- Secrets management: The docker secret create command stores secrets in the Raft log (encrypted at rest), mounted as tmpfs files in containers. Simple and secure for small deployments.

- Zero extra infrastructure: Swarm runs on the same Docker Engine already on your nodes.
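On the application side, reading a Swarm secret is plain file I/O. Swarm mounts secrets under /run/secrets/<name> by default. The `read_secret` helper below is a hypothetical name, and the sketch assumes the secret is stored as UTF-8 text.

```python
from pathlib import Path


def read_secret(name: str, secrets_dir: str = "/run/secrets") -> str:
    """Return the contents of a mounted secret file.

    Only a trailing newline is removed, since secrets created from
    files often end with one.
    """
    path = Path(secrets_dir) / name
    try:
        return path.read_text(encoding="utf-8").rstrip("\n")
    except FileNotFoundError:
        raise RuntimeError(f"secret {name!r} is not mounted at {path}") from None
```

In the Compose file above, the api service would call read_secret("db_password") at startup instead of reading an environment variable.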

Weaknesses

Effectively in maintenance mode: Docker Inc. has deprioritized Swarm since Docker Desktop became its focus. The Swarm GitHub issues contain years-old bugs with no response. Don't expect new features or significant fixes.

No horizontal autoscaling: There's no equivalent to HPA. Scaling is manual (docker service scale myapp=5) or through custom scripting.

Limited stateful support: Volumes in Swarm are basic Docker volumes — not portable across nodes by default. Running stateful workloads (databases, queues) requires third-party volume drivers or keeping stateful services pinned to specific nodes, which defeats the purpose of orchestration.

No namespace equivalent: All services share a flat namespace (stack names provide some separation, but there's no RBAC, no resource quotas, no multi-tenancy).

Small ecosystem: No operators, no custom resource types, no Helm equivalent (though Compose files are simpler to write than Helm charts). The CNCF ecosystem doesn't target Swarm.

Best For

- Teams with fewer than 10 services

- Proof of concepts or staging environments where Kubernetes overhead isn't justified

- Teams 100% committed to Docker Compose workflows who want minimal operational change

- Self-hosted deployments where you don't want to run a Kubernetes control plane

Kubernetes

Architecture

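Kubernetes has a genuinely complex architecture. The control plane is multiple separate processes: the API server, etcd for cluster state, the scheduler, and the controller manager. Every worker node also runs a kubelet and, in most setups, kube-proxy.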

This complexity exists for a reason: each component can be scaled, upgraded, and replaced independently. The scheduler is pluggable. The API server is the single source of truth for all cluster state, which enables the watch-based reconciliation pattern that makes operators and custom controllers possible.
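The reconciliation pattern is easier to see in code than in prose. This is a toy sketch of one reconcile pass over replica counts, not the Kubernetes controller machinery itself. The `reconcile` and `apply` functions and the dict-based state are assumptions for illustration.

```python
def reconcile(desired: dict, observed: dict) -> list:
    """Return the actions that move observed replica counts toward desired.

    Both arguments map a service name to a replica count.
    """
    actions = []
    for service, want in sorted(desired.items()):
        have = observed.get(service, 0)
        if have < want:
            actions.append(("start", service, want - have))
        elif have > want:
            actions.append(("stop", service, have - want))
    # Anything running that is no longer declared gets removed.
    for service, have in sorted(observed.items()):
        if service not in desired and have > 0:
            actions.append(("stop", service, have))
    return actions


def apply(actions: list, observed: dict) -> dict:
    """Pretend to carry out the actions and return the new observed state."""
    state = dict(observed)
    for verb, service, count in actions:
        delta = count if verb == "start" else -count
        state[service] = state.get(service, 0) + delta
    return {s: n for s, n in state.items() if n > 0}
```

A real controller runs this comparison every time a watch event arrives. Once observed state matches desired state, the pass produces no actions, which is what makes the loop safe to repeat.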

On managed Kubernetes (EKS, GKE, AKS), the control plane is abstracted away. You pay per hour and don't touch etcd. This dramatically reduces the operational burden.

Strengths

Ecosystem breadth: Every meaningful infrastructure tool has a Kubernetes operator, a Helm chart, or a Kubernetes-native interface. Prometheus, Istio, Argo CD, Vault, cert-manager, Crossplane, KEDA, Karpenter: all of them target Kubernetes first, and several (Argo CD, cert-manager, KEDA, Karpenter) run nowhere else. Choosing Kubernetes means choosing access to the entire CNCF landscape.

Managed offerings: EKS, GKE, and AKS abstract the control plane. You don't manage etcd backups, API server upgrades, or control plane HA. This is the single biggest operational advantage of Kubernetes in 2026.

Autoscaling maturity: HPA, VPA, KEDA (event-driven), and Karpenter (node-level) give you autoscaling at every layer. Swarm and Nomad don't have equivalents that are this composable.
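For a sense of what HPA does under the hood, the Kubernetes documentation gives the core formula as desiredReplicas = ceil(currentReplicas × currentMetric / targetMetric), with no change when the ratio is within a tolerance (0.1 by default). The sketch below applies that formula. The function name, parameter names, and the min/max defaults are my own.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Apply the documented HPA scaling formula to a single metric."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    ratio = current_metric / target_metric
    # Within tolerance of the target: leave the replica count alone.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    wanted = math.ceil(current_replicas * ratio)
    # Clamp to the configured bounds.
    return max(min_replicas, min(max_replicas, wanted))
```

For example, 3 replicas averaging 90% CPU against a 60% target gives a ratio of 1.5, so the function asks for 5 replicas. The real controller layers stabilization windows and multi-metric handling on top of this.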

Multi-tenancy via namespaces + RBAC: Namespaces, ResourceQuota, LimitRange, and RBAC combine to give you genuine multi-tenancy — multiple teams sharing a cluster with resource isolation and access controls.
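The quota half of that multi-tenancy story boils down to an admission check: a new workload is rejected if it would push the namespace past its hard limit. A simplified sketch follows. The `admit` function and the resource keys are hypothetical, and real ResourceQuota admission handles more cases than this.

```python
def admit(namespace_usage: dict, quota: dict, request: dict):
    """Decide whether a request fits inside a namespace's quota.

    All three arguments map a resource name to an integer amount.
    Returns (allowed, reason).
    """
    for resource, amount in request.items():
        hard = quota.get(resource)
        if hard is None:
            continue  # this resource is not limited by the quota
        used = namespace_usage.get(resource, 0)
        if used + amount > hard:
            return False, (f"exceeded quota: {resource} used={used} "
                           f"requested={amount} limit={hard}")
    return True, "ok"
```

One team exhausting its own quota gets rejections in its own namespace while every other team on the cluster keeps deploying.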

Stateful workloads: CSI drivers, StatefulSets, PodDisruptionBudgets, and a rich ecosystem of operators and charts (CloudNativePG for Postgres, Strimzi for Kafka, Bitnami charts for Redis) make stateful workloads tractable on Kubernetes in a way that isn't true for Swarm or bare Nomad.

Weaknesses

YAML sprawl: A minimal production Deployment in Kubernetes is ~60 lines of YAML before you add resource limits, probes, affinity rules, pod disruption budgets, and network policies. Helm helps but adds its own complexity. The cognitive overhead of K8s resource types (there are 50+ core types, plus dozens of CRDs per add-on) is real.

Overkill for small deployments: If you're running 3 services for a team of 2 developers, Kubernetes is a maintenance burden that returns no value. The operational cost — upgrades, node management, debugging CrashLoopBackOff, understanding why pods are Pending — is real even on managed K8s.

Learning curve: The Kubernetes model (desired state reconciliation, controllers, informers, pods vs deployments vs replicasets) takes months to internalize. A new engineer joining your team can contribute to a Swarm deployment in days; the Kubernetes learning curve is closer to 3-6 months before they're comfortable.

Best For

- Teams running more than ~20 services

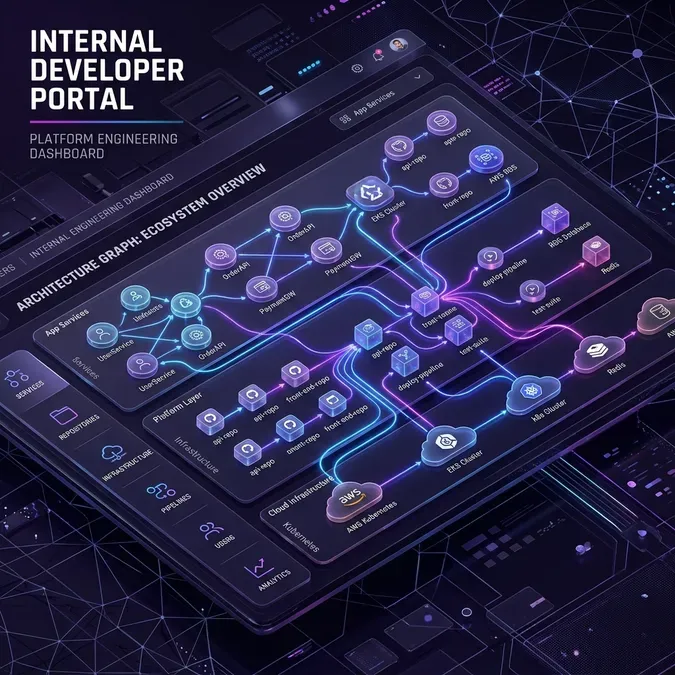

- Multi-team platforms (platform engineering teams building an IDP)

- Organizations that need the CNCF ecosystem (service mesh, GitOps, observability, cost management)

- Any shop that wants managed cloud-hosted control planes

- Teams that will scale their engineering organization and need to support dozens of developers

Nomad

Architecture

Nomad is HashiCorp's orchestrator, and it occupies a genuinely different design space from both Swarm and Kubernetes.

Servers maintain cluster state via Raft (same as Swarm managers or etcd in K8s). Clients run tasks. What makes Nomad distinct is the task driver model: Nomad can run containers (Docker, Podman), raw executables, Java applications, VMs (via QEMU), and, with community task drivers, WASM workloads. It's a general-purpose workload scheduler, not a container-specific one.

A Nomad job spec is written in HCL, not YAML:

```hcl
job "api-service" {
  datacenters = ["dc1", "dc2"]
  type        = "service"

  group "api" {
    count = 3

    network {
      port "http" { to = 8080 }
    }

    service {
      name     = "api-service"
      port     = "http"
      provider = "consul"

      check {
        type     = "http"
        path     = "/health"
        interval = "10s"
        timeout  = "2s"
      }
    }

    task "server" {
      driver = "docker"

      config {
        image = "myapp:1.2.3"
        ports = ["http"]
      }

      resources {
        cpu    = 500 # MHz
        memory = 256 # MB
      }

      # The task also needs a vault block so Nomad can obtain a Vault token;
      # its contents depend on how your cluster authenticates to Vault.
      template {
        # env = true expects KEY=value lines, so the key name is part of the template.
        data        = "DB_PASSWORD={{ with secret \"secret/data/api\" }}{{ .Data.data.db_password }}{{ end }}"
        destination = "secrets/env"
        env         = true
      }
    }
  }
}
```

Notice the template block pulling a secret directly from Vault: native integration without an operator, sidecar, or custom resource type.

Strengths

Mixed workloads: This is Nomad's defining strength. If you're running containers alongside legacy Java apps, batch jobs, or VMs on bare metal, Nomad schedules all of them with one tool. Kubernetes can run non-containers via virtual kubelet or custom runtime shims, but it's not a first-class use case. In Nomad, a Docker task and a raw_exec task are peers.

Simplicity relative to Kubernetes: Nomad has far fewer concepts. There are job types (service, batch, system, sysbatch), groups, tasks, and allocation. No Deployment vs ReplicaSet vs Pod hierarchy, no ConfigMaps vs Secrets vs projected volumes distinction. The mental model fits in your head.

HashiCorp stack integration: If you're already using Consul and Vault, Nomad integrates natively. Service discovery uses Consul directly — no Kubernetes DNS, no CoreDNS configuration, no Service resource. Secrets use Vault directly — no External Secrets Operator, no CSI driver, no Vault Agent sidecar. For HashiCorp shops, this is a genuine operational simplification.

Multi-region by design: Nomad's federation model (datacenters in job specs, server federation) is first-class. Running workloads across multiple datacenters or cloud regions is a job config change, not an architecture redesign.

Upgrade simplicity: Nomad server upgrades are rolling. You replace one server binary at a time. No etcd backup rituals, no kubeadm upgrade dance, no control plane drain procedures.

Weaknesses

Smaller ecosystem: Nomad doesn't have the CNCF backing. Popular Kubernetes operators (Strimzi, CloudNativePG, cert-manager) don't run on Nomad. If you want Kafka on Nomad, you're writing your own job spec, not installing an operator. This matters for stateful workloads in particular.

No managed cloud option: AWS, GCP, and Azure don't offer managed Nomad. HashiCorp Cloud Platform (HCP) offers managed Nomad, but it's not as mature or widely adopted as EKS/GKE/AKS. Self-managing Nomad servers is straightforward, but it's more work than managed Kubernetes.

Weaker stateful story: Nomad's volume support is good (CSI volumes are supported) but the operator ecosystem for stateful workloads is thin. Running Postgres on Nomad is a manual exercise; running it on Kubernetes with CloudNativePG is pushing a Helm chart.

Hiring pool: In most markets, Nomad expertise is rare. Finding engineers who know Nomad well enough to operate a production cluster is harder than finding Kubernetes engineers.

Head-to-Head Comparison

| Feature | Docker Swarm | Kubernetes | Nomad |
|---|---|---|---|
| Learning curve | Low | High | Medium |
| Setup complexity | Minimal | High (self-managed) / Low (managed) | Medium |
| Non-container workloads | ❌ | Limited | ✅ First-class |
| Managed cloud option | ❌ | ✅ EKS/GKE/AKS | Partial (HCP) |
| Autoscaling | Manual only | HPA/VPA/KEDA/Karpenter | Nomad Autoscaler (separate agent) |
| Stateful workload support | Poor | Excellent (operators) | Good (CSI, manual) |
| Service discovery | Built-in DNS | CoreDNS + Service | Consul |
| Secrets | Built-in (Raft) | K8s Secrets / ESO / Vault | Vault (native) |
| Network policy | None built-in | CNI-dependent | Consul intentions |
| Multi-tenancy | Minimal | Full (NS + RBAC) | Partial (namespaces) |
| Observability ecosystem | None | Extensive | Prometheus (manual) |
| GitOps tooling | None | Argo CD / Flux | Limited |
| Community size | Declining | Large | Growing |
| Maintenance status | Maintenance mode | Active | Active |

Operational Complexity: Day 1, Day 2, Day 365

The comparison table above misses the most important variable: how does each system feel to operate over time?

Day 1 (getting a service deployed):

- Swarm: docker stack deploy. Done in minutes. If you know Docker Compose, you already know this.

- Kubernetes: Write a Deployment, Service, and Ingress. Debug why the pod is Pending (resource requests no node can satisfy? taint mismatch?). Figure out ingress controller configuration. 2-4 hours for your first service.

- Nomad: Write an HCL job spec. Run nomad job run. Debug Consul service registration. 1-2 hours for your first service.

Day 2 (rolling update and rollback):

- Swarm: docker service update --image myapp:1.3.0 myapp. Rollback: docker service rollback myapp. Clean and simple.

- Kubernetes: kubectl set image deployment/myapp server=myapp:1.3.0, or update the Deployment YAML. Rollback: kubectl rollout undo deployment/myapp. Clean if you use GitOps, messy if you don't.

- Nomad: Update the image tag in the job spec and run nomad job run. Rollback: nomad job revert, passing the job name and the version to return to.

Day 365 (upgrading the orchestrator itself):

- Swarm: Update Docker Engine on each node. No control plane ceremony. Rolling update is straightforward.

- Kubernetes: Version upgrades require coordinating API deprecations (check kubectl api-versions), upgrading the control plane first (on managed K8s, click a button), then draining and upgrading node pools. On self-managed K8s, this is a significant project.

- Nomad: Replace server binaries one at a time. Clients can be upgraded without service interruption. HCL job spec changes are generally backward-compatible.

Stateful Workloads: The Biggest Gap

This is where the choice really matters for production systems.

Swarm: Essentially unsuited for stateful workloads. Volumes don't move with services, there are no StatefulSet semantics (stable network identity, ordered startup/shutdown), and there's no CSI driver ecosystem.

Kubernetes: The richest stateful story of the three. StatefulSets give stable pod names (postgres-0, postgres-1) and stable network identities. PVCs with CSI drivers abstract the storage layer. Operators (CloudNativePG, Strimzi, Redis Operator) handle complex lifecycle management — backups, failover, schema migrations. If you're running Postgres, Kafka, or Elasticsearch on Kubernetes, there's a mature operator for it.
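The "stable identity" part is just deterministic naming. Each StatefulSet pod gets an ordinal, and with a headless Service each pod is addressable at a predictable DNS name. A small sketch of those conventions follows; the function names and the postgres-headless Service name are hypothetical.

```python
def statefulset_pod_dns(name, replicas, service, namespace="default",
                        cluster_domain="cluster.local"):
    """DNS names for the pods of a StatefulSet behind a headless Service."""
    return [f"{name}-{i}.{service}.{namespace}.svc.{cluster_domain}"
            for i in range(replicas)]


def startup_order(name, replicas):
    """Pods are created in ordinal order under the default policy."""
    return [f"{name}-{i}" for i in range(replicas)]


def shutdown_order(name, replicas):
    """Pods are terminated in reverse ordinal order."""
    return list(reversed(startup_order(name, replicas)))
```

Because postgres-0 is always postgres-0, a replica can be configured to follow a fixed primary address, and that address survives the pod being rescheduled to another node.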

Nomad: CSI volume support exists and works well. Nomad has no direct StatefulSet equivalent. The closest you get is combining constraint and spread blocks for placement with per_alloc volumes, which bind one volume to each allocation index, and the NOMAD_ALLOC_INDEX variable for a stable ordinal. It works, but you write more manual configuration and there's no operator ecosystem to lean on. Running Postgres on Nomad means you're managing failover yourself or using an external solution like Patroni.

The Ecosystem Tax

Kubernetes' CNCF ecosystem is both its greatest strength and a trap.

The strength is obvious: there's a Kubernetes-native tool for every problem. Cert-Manager for certificates, External Secrets Operator for secrets sync, Argo CD for GitOps, KEDA for autoscaling, Karpenter for node provisioning. Each of these is well-maintained, well-documented, and widely used.

The trap: each of these tools is also a CRD surface to manage, a Helm upgrade to track, a RBAC policy to write, and an operator pod to monitor. A mature Kubernetes cluster often runs 15-20 add-on operators. Each one is a potential source of version conflicts, breaking changes, and operational surprises. I've seen clusters where the add-on burden exceeds the actual application workload in complexity.

Nomad and Swarm don't have this problem because they don't have the ecosystem. That's not entirely a negative.

Migration Paths

Swarm → Kubernetes: Kompose converts Docker Compose files to Kubernetes manifests. It works well for stateless services; stateful services need manual review. Plan for several weeks of migration time on a non-trivial Swarm deployment.

Nomad → Kubernetes: There's no automated migration tool. You rewrite job specs as Deployments + Services + Ingresses. The Consul-to-CoreDNS and Vault-native-to-ESO transitions are the hardest parts. For a small cluster, plan 2-4 weeks. For a large one, plan a parallel-run migration over months.

Kubernetes → Nomad: This happens more often than people admit, usually at organizations that find Kubernetes too complex for their workload mix (mixed containers + VMs, small team, no need for the CNCF ecosystem). The migration is similarly manual.

Decision Framework

Choose Docker Swarm if:

- You have fewer than 10 services

- Your team already knows Docker Compose and no one has time to learn Kubernetes

- You're prototyping or running non-production infrastructure

- You accept that Swarm is effectively in maintenance mode and you'll migrate eventually

Choose Nomad if:

- You have mixed workloads: containers running alongside Java apps, batch jobs, VMs, or WASM

- You're invested in the HashiCorp stack (Consul + Vault) and want native integration without operators

- You're running multi-datacenter on bare metal or self-managed VMs where managed Kubernetes isn't an option

- Your team is philosophically allergic to the Kubernetes YAML surface area

- You need multi-region scheduling as a first-class concern

Choose Kubernetes if:

- You need the CNCF ecosystem (service mesh, GitOps, policy engines, cost management)

- You want a managed control plane from your cloud provider (EKS/GKE/AKS)

- You're a multi-team platform — multiple product teams deploying to shared infrastructure

- You're running more than ~20 services and need proper multi-tenancy, RBAC, and resource quotas

- Your team needs to hire engineers who already know Kubernetes

For most engineering organizations in 2026 with more than 5 services and access to a managed Kubernetes offering, Kubernetes is the right choice — not because it's technically superior in every way, but because the operational burden is managed, the ecosystem is unmatched, and the hiring pool is the deepest of the three.

But I'd rather work on a well-operated Nomad cluster than a poorly operated Kubernetes cluster. The tool isn't the variable. The operational discipline is.

Further Reading

Once you've chosen Kubernetes and are looking at how to deploy to it consistently, Helm Best Practices for Production covers the packaging patterns that keep large K8s deployments manageable.

For autoscaling on Kubernetes specifically, KEDA: Event-Driven Autoscaling for Kubernetes covers how to scale based on queue depth, Prometheus metrics, and external events — far beyond what HPA alone can do.

For choosing the right CI/CD tool to deploy to whichever orchestrator you pick, Choosing the Right CI/CD Pipeline for Microservices walks through the evaluation framework.

If you're evaluating orchestrators for a specific architecture — mixed bare metal, multi-cloud, or a team migrating away from Swarm — reach out via the contact page. Happy to help think through the tradeoffs for your context.

Frequently Asked Questions

Is Docker Swarm still production-ready in 2026?

Yes, for specific use cases. If you have a small, stable set of services and want to minimize operational overhead, Swarm remains a solid choice. However, keep in mind that it is effectively in maintenance mode, and you may eventually need to migrate to Kubernetes or Nomad as your needs grow.

Why choose Nomad over Kubernetes?

Nomad excels in environments with "mixed workloads" where you need to orchestrate containers alongside legacy binaries, Java apps, or VMs. It is also significantly less complex to operate than a self-managed Kubernetes cluster and integrates natively with the HashiCorp stack (Vault/Consul).

Does Kubernetes always require a platform team?

For small deployments on managed services like EKS or GKE, a single engineer can handle the basics. However, as you add service meshes, GitOps, and complex security policies, the "Kubernetes tax" grows. Once you pass ~20-30 services, having dedicated platform engineering focus becomes a necessity.

Can I run stateful databases on these orchestrators?

Kubernetes is the clear winner for stateful workloads due to the mature Operator ecosystem (e.g., CloudNativePG for Postgres). Nomad supports CSI volumes and can run databases, but requires more manual lift. Docker Swarm is generally unsuited for stateful workloads that require portable storage.